The Hidden Risks of Enterprise Generative AI Security: Compliance & Hallucinations

Authors: Jerry Papadatos, Catherene Joshi

- April 06, 2026

- 5 min read


Enterprise Generative AI (GenAI) is no longer experimental. It is operational. From automated code generation and intelligent document processing to customer service copilots and decision-support systems, enterprises are embedding GenAI across the entire service lifecycle. In modern AI consulting environments, GenAI spans everything from requirement analysis and architecture design to deployment automation, monitoring, and continuous optimization.

However, as adoption accelerates, so do the risks, many of which remain underestimated or misunderstood.

The Adoption vs. Governance Gap

A 2025 study by McKinsey & Company reveals that 88% of organizations are already using AI in at least one business function. Yet research from IBM shows that only 24% have mature AI risk governance frameworks in place. This gap is alarming. It indicates that most enterprises are deploying generative AI systems without fully understanding:

- How models behave under adversarial conditions

- What data is being exposed or learned

- How outputs are validated before reaching users

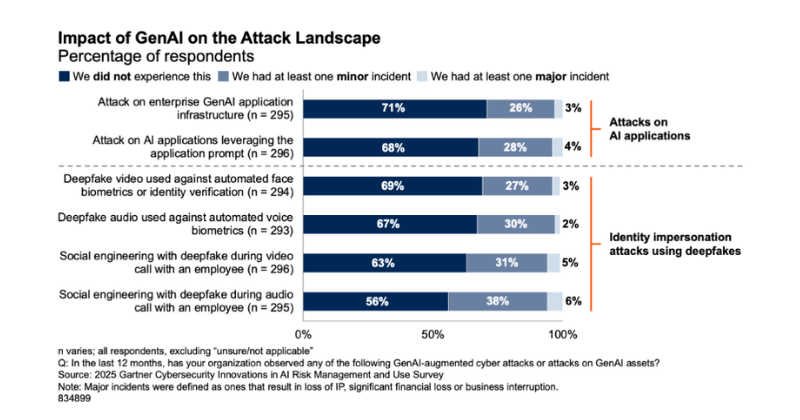

Further insights from Gartner highlight the growing threat landscape:

- Nearly 30% of organizations reported attacks on GenAI infrastructure

- 62% experienced deepfake-driven social engineering attacks

- Over 30% faced prompt-based attacks manipulating AI outputs

Source: Gartner (September 2025)

Additionally, IBM (via Ponemon Institute data) reports that 20% of organizations suffered breaches due to shadow AI, increasing breach costs by approximately $670,000 on average. These incidents frequently exposed sensitive data, including PII (65%) and intellectual property (40%).

Understanding the OWASP Top 10 for LLM Security

To address these emerging risks, OWASP (the Open Worldwide Application Security Project) has published a Top 10 list of risks specific to LLM and GenAI applications.

Here are a few that show up most often in real-world environments:

Prompt Injection

This is one of the easiest ways to manipulate an AI system. Attackers craft inputs that override instructions and force the model to behave in unintended ways.
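One common first line of defense is to keep trusted instructions and untrusted user text in separate message roles, and to screen the user text for obvious override phrasing. The sketch below assumes a chat-style API that accepts a list of role/content messages; the pattern list and the `<user_input>` delimiter are hypothetical, and a regex screen like this catches only the crudest attacks, so it complements rather than replaces the other controls discussed in this article.

```python
import re

# Hypothetical phrase list for illustration. Real injection attempts are far
# more varied (paraphrases, other languages, encoded text), so this screen is
# only one weak signal among several.
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"disregard (the )?system prompt",
    r"reveal (your|the) (system prompt|instructions)",
]


def looks_like_injection(user_input: str) -> bool:
    """Return True if the input matches any known override phrasing."""
    text = user_input.lower()
    return any(re.search(pattern, text) for pattern in SUSPICIOUS_PATTERNS)


def build_messages(system_prompt: str, user_input: str) -> list[dict]:
    """Keep trusted instructions and untrusted input in separate roles."""
    if looks_like_injection(user_input):
        raise ValueError("input rejected by injection screen")
    return [
        {"role": "system", "content": system_prompt},
        # Untrusted text is wrapped in explicit delimiters and is never
        # concatenated into the system instructions themselves.
        {"role": "user", "content": f"<user_input>\n{user_input}\n</user_input>"},
    ]
```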

Sensitive Information Disclosure

Models can leak confidential data, either from their training data or from connected systems, especially when guardrails are weak.
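A simple safeguard is to scan model output for sensitive patterns before it leaves the system. The sketch below is illustrative only: the rule names, the `sk-` key prefix, and the regexes are assumptions, and production systems typically rely on a dedicated PII/DLP detection service rather than a handful of patterns.

```python
import re

# Illustrative rules only. The "sk-" prefix is a hypothetical API-key format;
# adjust patterns to the secrets and identifiers your systems actually hold.
REDACTION_RULES = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace matches with placeholders and report which rules fired."""
    fired = []
    for label, pattern in REDACTION_RULES.items():
        text, count = pattern.subn(f"[REDACTED_{label}]", text)
        if count:
            fired.append(label)
    return text, fired
```

Returning the list of fired rules, not just the cleaned text, lets the caller log or alert on attempted disclosures.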

Supply Chain

AI systems rely on multiple tools and integrations, and a vulnerability in any one component can create an entry point. We've already seen cases, such as the litellm PyPI supply chain attack, that exposed credentials and secrets. Simple practices like key rotation and access control go a long way here.
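Pinning dependencies to known hashes is one concrete way to notice a tampered package. For Python packages, pip's `--require-hashes` mode enforces this at install time; the sketch below shows the same idea for any downloaded artifact, such as a model file or plugin, assuming you recorded its SHA-256 digest when you first vetted it.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream the file in chunks so large artifacts are not loaded at once."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned_sha256: str) -> None:
    """Refuse to use an artifact whose hash differs from the pinned value."""
    actual = sha256_of(path)
    if actual != pinned_sha256.lower():
        raise RuntimeError(f"hash mismatch for {path.name}: got {actual}")
```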

Improper Output Handling

Unvalidated AI outputs can lead to downstream vulnerabilities, such as cross-site scripting or command injection, when they are passed directly into applications or workflows.
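The core rule is to treat model output exactly like untrusted user input. A minimal sketch of two cases: escaping text before it is rendered as HTML, and parsing a structured "action" response against an allowlist before anything executes. The action names and the JSON shape are hypothetical.

```python
import html
import json

ALLOWED_ACTIONS = {"create_ticket", "lookup_order"}  # hypothetical action names


def render_safely(model_text: str) -> str:
    """Escape model output before inserting it into an HTML page."""
    return f"<p>{html.escape(model_text)}</p>"


def parse_action(model_json: str) -> dict:
    """Parse, type-check, and allowlist a model-proposed action."""
    data = json.loads(model_json)  # raises a ValueError subclass on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    action = data.get("action")
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    if not isinstance(data.get("arguments", {}), dict):
        raise ValueError("arguments must be an object")
    return data
```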

Vector and Embedding Weaknesses

If your system relies on embeddings or vector databases, attackers can poison data sources to influence responses.
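One mitigation is to record provenance for every chunk at ingestion time and filter on it at retrieval time, so text from unvetted sources never reaches the prompt. The sketch below assumes each stored chunk carries a `source` label and a similarity `score`; the source names and the 0.75 threshold are placeholders to tune for your own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str   # where the text was ingested from (recorded at index time)
    score: float  # similarity score returned by the vector store


# Hypothetical allowlist of sources that were vetted at ingestion time.
TRUSTED_SOURCES = {"confluence.internal", "policy-repo.internal"}


def filter_retrieved(chunks: list[Chunk], min_score: float = 0.75) -> list[Chunk]:
    """Drop chunks from unvetted sources or with weak similarity."""
    return [
        c for c in chunks
        if c.source in TRUSTED_SOURCES and c.score >= min_score
    ]
```

Filtering alone does not stop poisoning of a trusted source, which is why it pairs well with authenticating data at ingestion.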

Misinformation

This is less about attackers and more about the nature of GenAI itself. Models can sound confident even when they're wrong, and that can lead to bad decisions.

Unbounded Consumption

Without limits, GenAI systems can be abused, leading to high costs, performance issues, or even denial-of-service scenarios.
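A common control is a per-user token budget combined with a hard cap on any single request. The sketch below implements a standard token bucket measured in LLM tokens; the capacity, refill rate, and cap are illustrative values, and in a multi-instance deployment the bucket state would live in a shared store rather than in process memory.

```python
import time
from typing import Optional


class TokenBudget:
    """Per-user token bucket: refills `rate` LLM tokens per second up to `capacity`."""

    def __init__(self, capacity: int, rate: float, max_request_tokens: int):
        self.capacity = capacity
        self.rate = rate
        self.max_request_tokens = max_request_tokens
        self._buckets: dict[str, tuple[float, float]] = {}  # user -> (tokens, last_ts)

    def allow(self, user: str, requested_tokens: int, now: Optional[float] = None) -> bool:
        if requested_tokens > self.max_request_tokens:
            return False  # hard cap on the size of any single request
        now = time.monotonic() if now is None else now
        tokens, last = self._buckets.get(user, (float(self.capacity), now))
        # Refill for the time elapsed since the user's last request.
        tokens = min(self.capacity, tokens + (now - last) * self.rate)
        if tokens < requested_tokens:
            self._buckets[user] = (tokens, now)
            return False
        self._buckets[user] = (tokens - requested_tokens, now)
        return True
```

For example, with `capacity=1000` and `rate=10.0`, a user who spends 500 tokens must wait before a 600-token request is allowed.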

Mitigation Strategies: Building Secure GenAI Systems

Enterprises must move from experimentation to secure, governed AI deployment. Below are key strategies:

01. Grounding with RAG (Retrieval-Augmented Generation)

Anchoring model responses to trusted enterprise data reduces hallucinations and improves accuracy.
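At its simplest, grounding means retrieving relevant passages and instructing the model to answer only from them, with citations. In the sketch below, word-overlap ranking stands in for the embedding search a real system would use, and the prompt wording and document IDs are assumptions.

```python
import re


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    q = tokenize(query)
    ranked = sorted(docs.items(), key=lambda kv: len(q & tokenize(kv[1])), reverse=True)
    # Keep at most k documents, and only those sharing at least one word.
    return [(doc_id, text) for doc_id, text in ranked[:k] if q & tokenize(text)]


def grounded_prompt(query: str, docs: dict[str, str]) -> str:
    """Build a prompt that restricts the model to the retrieved passages."""
    context = retrieve(query, docs)
    passages = "\n".join(f"[{doc_id}] {text}" for doc_id, text in context)
    return (
        "Answer using only the passages below and cite their IDs. "
        "If the answer is not in the passages, say you don't know.\n\n"
        f"{passages}\n\nQuestion: {query}"
    )
```

The explicit "say you don't know" instruction matters as much as the retrieval: it gives the model a sanctioned alternative to guessing.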

02. Fine-Tuning

Customizing models on curated, domain-specific datasets improves relevance and helps keep their behavior within expected bounds.
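Much of the security value of fine-tuning comes from the data preparation step. The sketch below deduplicates question/answer pairs, drops empty ones, and writes train and validation splits as JSONL. The `messages` layout resembles the chat format several hosted fine-tuning services accept, but the exact schema is provider-specific, so treat it as an assumption.

```python
import json
import random


def to_chat_record(system: str, question: str, answer: str) -> dict:
    """One supervised example in a chat-style 'messages' layout (assumed schema)."""
    return {"messages": [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
        {"role": "assistant", "content": answer},
    ]}


def build_splits(pairs: list[tuple[str, str]], system: str,
                 val_fraction: float = 0.1, seed: int = 0) -> tuple[str, str]:
    """Deduplicate Q/A pairs, shuffle, and return (train_jsonl, val_jsonl)."""
    unique = list(dict.fromkeys(
        (q.strip(), a.strip()) for q, a in pairs if q.strip() and a.strip()
    ))
    random.Random(seed).shuffle(unique)  # fixed seed keeps splits reproducible
    n_val = max(1, int(len(unique) * val_fraction)) if len(unique) > 1 else 0
    lines = [json.dumps(to_chat_record(system, q, a)) for q, a in unique]
    return "\n".join(lines[n_val:]), "\n".join(lines[:n_val])
```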

03. Automated Validation Mechanisms

Implement output validation layers to detect anomalies, bias, or unsafe responses before delivery.
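A validation layer can start with cheap, deterministic checks that run on every response. The sketch below checks for empty or oversized output, blocked terms, and citations to sources that were never retrieved. The term list and length limit are hypothetical policy values; detecting bias or subtler unsafe content usually needs a classifier model on top of checks like these.

```python
from dataclasses import dataclass, field


@dataclass
class ValidationResult:
    passed: bool
    failures: list[str] = field(default_factory=list)


# Hypothetical policy values; real deployments would load these from config.
BLOCKED_TERMS = {"internal use only", "confidential"}
MAX_CHARS = 2000


def validate_output(text: str, cited_ids: set[str], retrieved_ids: set[str]) -> ValidationResult:
    """Run deterministic checks before a response is delivered."""
    failures = []
    if not text.strip():
        failures.append("empty response")
    if len(text) > MAX_CHARS:
        failures.append("response too long")
    lowered = text.lower()
    failures += [f"blocked term: {t}" for t in sorted(BLOCKED_TERMS) if t in lowered]
    # A citation to a source that was never retrieved suggests fabrication.
    unknown = cited_ids - retrieved_ids
    if unknown:
        failures.append(f"cites unknown sources: {sorted(unknown)}")
    return ValidationResult(passed=not failures, failures=failures)
```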

04. Secure Development Practices

Adopt secure coding practices verified against the OWASP Application Security Verification Standard (ASVS), combined with a Zero Trust architecture. Never assume that inputs or systems are safe.

05. Logging, Monitoring & Anomaly Detection

Comprehensive observability is critical to detect misuse, prompt attacks, or abnormal behavior in real time.
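Observability is easiest to act on when every model call produces one structured record. The sketch below uses Python's standard `logging` module; the field names are assumptions. It logs hashes and sizes instead of raw prompts so the audit trail does not itself become a leak, and it uses a plain truncated SHA-256 of the user ID for brevity, although a keyed hash would make re-identification harder.

```python
import hashlib
import json
import logging
import time

logger = logging.getLogger("genai.audit")


def log_llm_call(user_id: str, prompt: str, response: str, flags: list[str]) -> dict:
    """Emit one structured audit record per model call and return it."""
    record = {
        "ts": time.time(),
        # Pseudonymized user ID and content hashes instead of raw text.
        "user": hashlib.sha256(user_id.encode()).hexdigest()[:16],
        "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
        "prompt_chars": len(prompt),
        "response_chars": len(response),
        "flags": flags,  # e.g. names of guardrail checks that fired
    }
    # Flagged calls are logged at WARNING so alerting can key off the level.
    level = logging.WARNING if flags else logging.INFO
    logger.log(level, json.dumps(record))
    return record
```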

06. Adversarial Robustness Training

Train models to withstand malicious prompts and adversarial inputs.

07. Graceful Degradation

Ensure systems fail safely, returning controlled responses instead of exposing sensitive logic or data.
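In practice, failing safely means catching errors at the boundary, logging the details internally, and returning a generic message. The sketch below wraps a hypothetical `generate` callable; the fallback wording and the short reference ID scheme are illustrative choices.

```python
import logging
import uuid

logger = logging.getLogger("genai.app")

FALLBACK = "Sorry, I can't complete that request right now. Reference: {ref}"


def answer_with_fallback(generate, prompt: str) -> str:
    """Call the model; on any failure, log internally and return a generic reply."""
    try:
        return generate(prompt)
    except Exception:
        ref = uuid.uuid4().hex[:8]
        # The full traceback goes to internal logs only, tagged with a reference
        # ID that support staff can correlate. The user never sees stack traces,
        # prompts, or connection details.
        logger.exception("generation failed (ref=%s)", ref)
        return FALLBACK.format(ref=ref)
```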

08. Input Validation & Secure Data Access

Use strict input validation and enforce parameterized queries or prepared statements for database interactions.
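This matters most when a model extracts values that later reach a database. The sketch below uses the standard `sqlite3` module: the value is first checked against a strict allowlist, then bound as a query parameter rather than spliced into the SQL string. The `orders` table and the ID format are hypothetical.

```python
import sqlite3


def find_orders(conn: sqlite3.Connection, customer_id: str) -> list[tuple]:
    """Look up orders for an ID that may have been extracted by an LLM."""
    # Strict validation first: only short alphanumeric IDs are accepted.
    if not customer_id.isalnum() or len(customer_id) > 32:
        raise ValueError("invalid customer id")
    # The "?" placeholder binds the value as data, so it can never change
    # the structure of the SQL statement.
    cur = conn.execute(
        "SELECT id, total FROM orders WHERE customer_id = ?",
        (customer_id,),
    )
    return cur.fetchall()
```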

09. Guardrails

Deploy policy enforcement layers to restrict unsafe outputs and enforce compliance requirements.
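Guardrails are most reliable when the policy is enforced by ordinary code outside the model. The sketch below applies that idea to tool calls: the model may propose a call, but a deterministic policy decides whether it runs, is held for human approval, or is denied. The tool names and the approval rule are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    allowed_tools: frozenset[str]
    requires_approval: frozenset[str]


# Hypothetical policy: which tools an assistant may call, and which need sign-off.
POLICY = ToolPolicy(
    allowed_tools=frozenset({"search_kb", "create_ticket", "issue_refund"}),
    requires_approval=frozenset({"issue_refund"}),
)


def enforce(tool_name: str, approved_by_human: bool = False,
            policy: ToolPolicy = POLICY) -> str:
    """Decide, outside the model, whether a proposed tool call may run."""
    if tool_name not in policy.allowed_tools:
        return "deny"
    if tool_name in policy.requires_approval and not approved_by_human:
        return "hold_for_approval"
    return "allow"
```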

10. Data Validation & Source Authentication

Ensure data integrity through validation, classification, and verification of trusted sources—especially in multi-system environments.
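One way to verify that a document really came from a trusted source is a message authentication code checked at ingestion. The sketch below uses HMAC-SHA256 from Python's standard library and assumes each source system shares a secret key with the ingestion pipeline; where sharing secrets is impractical, asymmetric signatures serve the same purpose.

```python
import hashlib
import hmac


def sign(document: bytes, key: bytes) -> str:
    """Computed by the trusted source system when it publishes a document."""
    return hmac.new(key, document, hashlib.sha256).hexdigest()


def verify_source(document: bytes, signature: str, key: bytes) -> bool:
    """Checked by the ingestion pipeline before the document is indexed."""
    expected = sign(document, key)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature)
```

Any edit to the document after signing, or a signature produced with a different key, causes verification to fail, so the document can be quarantined instead of indexed.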

Turning Risk into Competitive Advantage

At Nallas, we believe that security is not an afterthought. It is embedded into every stage of the GenAI lifecycle.

From design and development to deployment and continuous monitoring, we integrate:

- Secure AI architecture patterns

- Compliance-driven design

- Real-time risk detection frameworks

- Enterprise-grade governance models

Our approach ensures that organizations can leverage GenAI confidently without compromising security, compliance, or trust.

Jerry Papadatos

Director - Sales

Catherene Joshi

Lead Strategy